The End of Cheap AI Tokens

Last month I hit my Claude usage limit at 2 PM on a Tuesday. I was in the middle of debugging a WebSocket connection in a Next.js application, and the conversation was getting long. The context window was full of code, error logs, and back-and-forth about edge cases. I clicked send and got a message I had never seen before: "You have reached your usage limit. Please wait or upgrade to continue."

I am paying $20 a month. I have been paying $20 a month since Claude Pro launched. For that price I got access to one of the most intelligent systems ever built, and I used it every single day to write code, review pull requests, debug production issues, plan architecture, and draft content for my job board. Twenty dollars for what felt like having a senior engineer sitting next to me around the clock.

That era is ending. And if you are a JavaScript developer who has built your daily workflow around Copilot, Claude, Cursor, or any other AI coding tool, what is happening right now in the pricing models of these companies will change how you work within the next six to twelve months.

I am not writing this as a think piece about the future. I am writing this because I see it already affecting developers who message me through my job board. The ones who built their speed and confidence on AI assistance are starting to hit walls. And most of them do not understand why.

Why Twenty Dollars Was Never a Real Price

The $20 monthly subscription for Claude Pro, ChatGPT Plus, and similar tools was never sustainable. It was a customer acquisition strategy, the same way Uber spent billions on below-cost rides to build a user base. The AI companies were losing money on every heavy user from the first month.

Here is the math that most developers never think about. When you send a complex coding query to Claude with a long conversation history, you are consuming what the industry calls "tokens." Each token requires milliseconds of GPU time on hardware that costs thousands of dollars per hour to run. A single deep reasoning request on a model like Claude Opus can cost the company $0.30 to $0.50 in compute. If you make 40 of those requests in a day, which is normal for a developer in an active coding session, you have burned through $12 to $20 in a single day. Your entire monthly subscription gone in one afternoon.
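A quick sketch of that arithmetic in TypeScript, using only the estimates from the paragraph above. The per-request cost is this article's rough figure, not a published price, and the function and constant names are made up for illustration. Amounts are in cents to keep the numbers exact.

```typescript
// All figures are the article's estimates, expressed in cents.
const SUBSCRIPTION_CENTS = 2000; // the $20 monthly plan
const COST_PER_REQUEST_CENTS = { low: 30, high: 50 }; // $0.30 to $0.50 per deep reasoning request
const REQUESTS_PER_DAY = 40; // an active coding session

// Compute cost the provider pays for one day of requests.
function dailyComputeCents(requestsPerDay: number, costPerRequestCents: number): number {
  return requestsPerDay * costPerRequestCents;
}

// Days of heavy use until compute cost equals the subscription fee.
function daysUntilSubscriptionSpent(subscriptionCents: number, dailyCents: number): number {
  return subscriptionCents / dailyCents;
}

const dailyLow = dailyComputeCents(REQUESTS_PER_DAY, COST_PER_REQUEST_CENTS.low);   // 1200 cents = $12
const dailyHigh = dailyComputeCents(REQUESTS_PER_DAY, COST_PER_REQUEST_CENTS.high); // 2000 cents = $20

console.log(`Daily compute: $${dailyLow / 100} to $${dailyHigh / 100}`);
console.log(
  `Days covered by the subscription: ${daysUntilSubscriptionSpent(SUBSCRIPTION_CENTS, dailyHigh)} ` +
  `to ${daysUntilSubscriptionSpent(SUBSCRIPTION_CENTS, dailyLow).toFixed(1)}`
);
```

Even at the low end of the estimate, the subscription fee is used up in under two days of heavy use.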

The companies covered this gap with investor money. Anthropic raised billions of dollars from Amazon. OpenAI raised roughly $13 billion from Microsoft. That money subsidized your $20 subscription so you would build habits around the tool, become dependent on it, and eventually accept the real price when it arrived.

The real price is arriving. OpenAI now charges $200 per month for ChatGPT Pro. Anthropic introduced Claude Max at $100 to $200 per month. Microsoft is moving GitHub Copilot toward token-based billing where you pay for what you use rather than a flat monthly fee. The direction is clear: the flat-rate all-you-can-eat model is being replaced by metered pricing, the same way you pay for electricity or cloud computing.

What Changed in 2026 That Made This Inevitable

Three things converged this year that made cheap AI tokens impossible to sustain, and all three are accelerating.

The first is model complexity. The AI models you use today are fundamentally more expensive to run than the ones you used two years ago. Claude in 2024 was fast and relatively cheap to serve. Claude in 2026 uses "reasoning" architectures that generate thousands of hidden tokens before producing your answer. You do not see these tokens. They do not appear in your chat. But the company pays for every single one of them. When you ask Claude to debug your React component, it might generate 3,000 tokens of internal reasoning before giving you a 500-token response. You see 500 tokens. The company pays for 3,500.
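To make the gap between what you see and what gets generated concrete, here is a minimal sketch using the numbers from the debugging example. The `RequestUsage` shape and function names are hypothetical, and the sketch ignores input tokens (your prompt and conversation history), which add further cost.

```typescript
interface RequestUsage {
  reasoningTokens: number; // hidden tokens generated before the answer
  visibleTokens: number;   // tokens that actually appear in the chat
}

// Total output tokens the provider has to generate for one request.
function generatedTokens(usage: RequestUsage): number {
  return usage.reasoningTokens + usage.visibleTokens;
}

// How many tokens are generated for each token the user sees.
function hiddenMultiplier(usage: RequestUsage): number {
  return generatedTokens(usage) / usage.visibleTokens;
}

const debugRequest: RequestUsage = { reasoningTokens: 3000, visibleTokens: 500 };

console.log(generatedTokens(debugRequest));  // 3500
console.log(hiddenMultiplier(debugRequest)); // 7
```

In this example the provider generates seven tokens for every one that reaches your screen.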

The second is compute scarcity. There are not enough GPUs in the world to serve every developer who wants AI assistance at current prices. NVIDIA cannot manufacture chips fast enough. Data centers cannot get enough electricity. Cities are refusing to grant power permits to new AI facilities because they would drain the local grid. This is not a temporary supply chain issue. This is a fundamental resource constraint that will last years. When supply is scarce and demand is exploding, prices go up. That is not greed. That is supply and demand running into physical limits.

The third is the rise of AI agents. Tools like Claude Code, Cursor Agent Mode, and GitHub Copilot Workspace do not make one request at a time. They make hundreds. When you ask an AI agent to "refactor this module and update all the tests," the agent might make 50 to 200 API calls behind the scenes, each consuming tokens, each costing money. One developer running an AI agent for an hour can consume more compute than a hundred developers using basic chat. The companies cannot absorb this cost at $20 per month. It is not possible.
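A rough way to see the scale, assuming each agent call costs about as much as the 3,500-token debugging request described earlier. That per-call figure is an assumption for illustration only; real agent calls vary widely, and the names here are hypothetical.

```typescript
// Assumption: every agent call generates about as many tokens as one chat debugging request.
const TOKENS_PER_CALL = 3500;

interface TokenRange {
  low: number;
  high: number;
}

// Token estimate for one agent task, given the range of API calls it might make.
function agentTaskTokens(callsLow: number, callsHigh: number, tokensPerCall: number): TokenRange {
  return { low: callsLow * tokensPerCall, high: callsHigh * tokensPerCall };
}

// "Refactor this module and update all the tests": 50 to 200 calls behind the scenes.
const refactorTask = agentTaskTokens(50, 200, TOKENS_PER_CALL);

console.log(`One chat request: ${TOKENS_PER_CALL} tokens`);
console.log(`One agent task: ${refactorTask.low} to ${refactorTask.high} tokens`);
```

Under that assumption, a single agent instruction lands somewhere between 175,000 and 700,000 tokens, where a chat question costs 3,500.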

How This Is Already Hitting JavaScript Developers

I run a JavaScript job board. I talk to developers every day. Here is what I am seeing that most tech publications are not covering.

Developers who built their entire workflow around AI-assisted coding are experiencing what I call "capability withdrawal." They got fast. They got confident. They shipped features that used to take three days in three hours. Their managers noticed and adjusted expectations upward. Now the AI tools are throttling them mid-sprint, and they cannot go back to their old speed because they have not maintained the muscle memory for manual coding. I wrote about this problem of skill decay in AI-heavy roles just last week, and the response told me this is hitting harder than anyone expected.

Junior developers are in the worst position. Many of them learned to code with AI from day one. They have never debugged a complex React component without Copilot suggesting the fix. They have never traced a memory leak through a Node.js application by reading stack traces manually. When their AI tokens run out or their company downgrades the AI subscription to save costs, these developers are exposed. Their apparent skill level drops overnight because a significant part of their skill was never theirs. It was the AI's.

Senior developers are experiencing a different kind of pain. They can code without AI. They have the fundamentals. But they built speed assumptions around AI assistance that are now baked into project timelines and sprint commitments. When GitHub Copilot starts charging per token instead of a flat $19 per month, the cost of maintaining that speed becomes visible on the company's bill. And companies are starting to ask: is this developer fast because they are good, or fast because we are spending $500 a month on their AI tools?

This question is showing up in job postings on my board. Companies are starting to specify "AI-native workflow required" not as a perk but as a cost calculation. If they are going to pay for your AI tokens, they want to know you are using them efficiently. "AI-native" in a job posting increasingly means "we are budgeting AI compute per developer and we need you to stay within that budget."

The New Economics of Being a Developer

Think about this for a moment. For the last two years, the cost of being a productive JavaScript developer was essentially fixed: your salary, a laptop, maybe some SaaS subscriptions. AI tools were either free or $20 a month. The marginal cost of asking AI for help on any coding task was zero.

That is changing to a model where every question has a price. Every debugging session has a meter running. Every refactoring request burns tokens that show up on a bill. This is not speculation. Microsoft already showed the prototype: Copilot usage dashboards for engineering managers that show token consumption per developer per week.

Imagine your manager reviewing a dashboard that says: "Developer A used $340 in AI tokens this month. Developer B used $89. Both shipped the same number of features." What conversation do you think happens next?

This is going to change how developers think about AI assistance in the same way metered internet changed how people thought about downloading music in the early 2000s. When it was unlimited, you downloaded everything. When it was metered, you became selective. Developers will start asking themselves: "Is this problem worth burning $2 in tokens, or should I spend 20 minutes solving it myself?"
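That question can be written as a small calculation. This is only a sketch: the hourly rates below are hypothetical, it assumes the AI really does save the full 20 minutes, and the function names are made up for illustration. Amounts are in cents.

```typescript
// Hourly rate at which paying for tokens and solving it yourself cost the same.
function breakEvenHourlyRateCents(tokenCostCents: number, minutesSaved: number): number {
  return (tokenCostCents * 60) / minutesSaved;
}

// True when the time saved is worth more than the tokens burned.
function worthAsking(tokenCostCents: number, minutesSaved: number, hourlyRateCents: number): boolean {
  const timeValueCents = (hourlyRateCents * minutesSaved) / 60;
  return timeValueCents > tokenCostCents;
}

// $2 in tokens versus 20 minutes of your own time.
console.log(breakEvenHourlyRateCents(200, 20)); // 600, i.e. $6 per hour
console.log(worthAsking(200, 20, 5000));        // true at a hypothetical $50 per hour
console.log(worthAsking(200, 20, 500));         // false at a hypothetical $5 per hour
```

The answer depends on whose money it is and what an hour is worth to whoever pays, which is why the same request can be an obvious yes for one developer and a real cost for another.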

And this selectivity will, paradoxically, make some developers better. The ones who learn to use AI strategically, for the problems where it genuinely saves hours, will get better ROI than the ones who use it as a crutch for every small task. Knowing when to ask AI and when to think for yourself is becoming an economic skill, not just a technical one.

What Nobody Is Talking About

Here is something I have not seen anyone else say. The end of cheap AI tokens is going to split the JavaScript developer market into two tiers more visibly than any technology shift before it.

Tier one: developers at well-funded companies that can afford $200 to $500 per month per developer in AI tooling. These developers will continue to ship fast, use the most powerful models, and build increasingly complex applications with AI assistance. Their companies will pay because the ROI is clear: one developer with unlimited Claude access ships what two developers without it can produce.

Tier two: everyone else. Freelancers, developers at startups with tight budgets, developers in countries where $200 a month is a significant portion of their salary, junior developers who cannot justify the cost before they have proven their value. These developers will use free tiers, smaller models, and open-source alternatives. They will be slower. And the gap between the two tiers will grow every month as the models get better and more expensive simultaneously.

I live in Turkey. I see this split from a perspective that most tech writers in San Francisco do not have. For a developer in Istanbul, Bangalore, Lagos, or Sao Paulo, $200 a month for AI tools is not a "cup of coffee" expense. It is a week's rent. The geographic arbitrage that was supposed to make remote work fair is being undermined by AI pricing that assumes every developer lives in a high-income country.

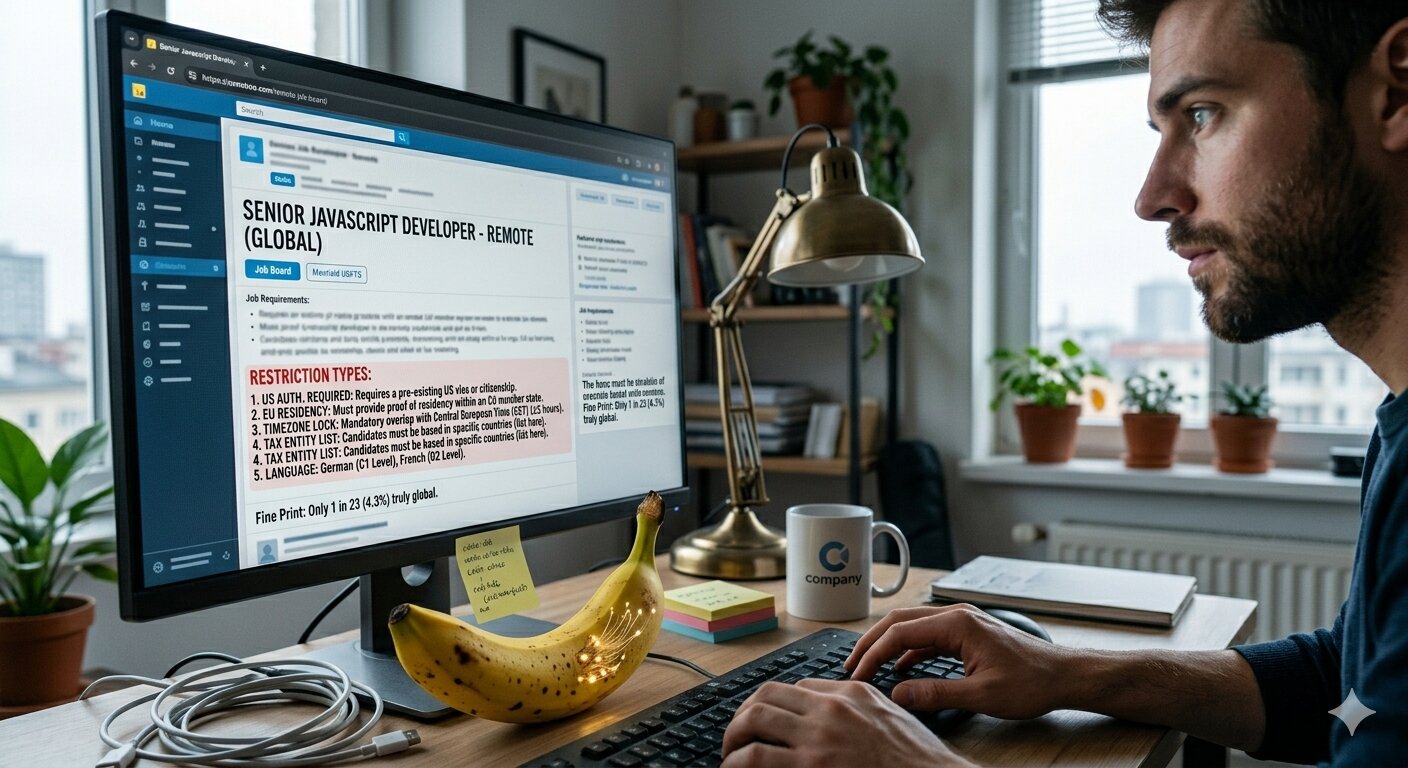

This connects directly to what I wrote about the hidden restrictions in remote JavaScript jobs. The remote job market already filters out developers by geography, timezone, and legal entity. Now it will filter by AI budget too. If two candidates apply for the same role and one has access to Claude Opus and the other is using the free tier of a smaller model, the productivity gap is visible from the first pair programming session.

What To Do About It

I am not going to pretend there is a cheerful solution to this. The economics are real and they are not going away. But there are practical steps that make sense if you are a JavaScript developer watching the price of your tools climb.

First, rebuild your ability to code without AI. Not because AI is going away, but because depending on a tool you might lose access to is a career risk. Spend 30 minutes a day solving problems without Copilot. Do it the way you used to. Feel the slowness. Rebuild the neural pathways. This is insurance, and it is free.

Second, learn to be efficient with tokens. Long, meandering conversations with Claude are expensive. Short, precise prompts with clear context get better answers and cost less. If you are sending your entire codebase as context when you only need help with one function, you are burning money. This is a skill that will matter more every year.
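A rough sketch of why context size matters. It uses the common rule of thumb of about four characters per token, which is only an approximation and not a real tokenizer; actual counts depend on the model. The sample strings are stand-ins for a real project.

```typescript
// Rough heuristic, not a real tokenizer: about 4 characters per token.
const CHARS_PER_TOKEN = 4;

function estimateTokens(text: string): number {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

// Hypothetical stand-ins: one function versus a codebase made of 5,000 similar ones.
const oneFunction = "function add(a, b) { return a + b; }\n";
const wholeCodebase = oneFunction.repeat(5000);

const focusedTokens = estimateTokens(oneFunction);
const codebaseTokens = estimateTokens(wholeCodebase);

console.log(`Focused prompt: ~${focusedTokens} tokens`);
console.log(`Whole codebase: ~${codebaseTokens} tokens`);
console.log(`Ratio: ~${Math.round(codebaseTokens / focusedTokens)}x`);
```

Running an estimate like this before pasting code into a conversation is a cheap habit: if the context is thousands of times larger than the function you need help with, most of those tokens are paying for nothing.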

Third, look at open-source models seriously. Llama, Mistral, DeepSeek, and others are closing the gap fast. Running a local model on a decent laptop is already viable for 60 to 70 percent of daily coding tasks. The remaining 30 to 40 percent still needs frontier models, but you do not need frontier intelligence for writing unit tests or generating boilerplate.
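One way to put that split into practice is a simple routing rule: routine work goes to a local model, hard problems go to a paid one. The sketch below is illustrative only. The task categories and tier names are hypothetical labels, and it does not call any real model API.

```typescript
type TaskKind = "unit-tests" | "boilerplate" | "complex-debugging" | "architecture";
type ModelTier = "local" | "frontier";

// Routine tasks that do not need frontier intelligence.
const ROUTINE_TASKS: ReadonlySet<TaskKind> = new Set<TaskKind>(["unit-tests", "boilerplate"]);

// Send routine work to the free local model and save paid tokens for the rest.
function pickModelTier(task: TaskKind): ModelTier {
  return ROUTINE_TASKS.has(task) ? "local" : "frontier";
}

console.log(pickModelTier("unit-tests"));        // local
console.log(pickModelTier("boilerplate"));       // local
console.log(pickModelTier("complex-debugging")); // frontier
console.log(pickModelTier("architecture"));      // frontier
```

In a real workflow the decision would be fuzzier than a lookup table, but even a crude rule like this keeps paid tokens for the problems that need them.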

Fourth, if you are job searching, ask about AI tooling in your interview. "What AI tools does your team use and how are they budgeted?" This question tells you whether the company sees AI as an investment or an expense. Companies that budget AI per developer and track usage are going to optimize ruthlessly. Companies that provide unlimited access are either well-funded or not paying attention to the bill yet.

The era of unlimited AI for $20 was a gift from venture capitalists who were competing for market share. That competition is settling into an industry with real costs, real scarcity, and real pricing. The developers who understand this transition and adapt their skills and habits accordingly will be fine. The ones who assume cheap tokens are a permanent condition are going to have a very rough 2027.

Tokens are not magic. They are a physical resource generated by machines that consume electricity in buildings that cost billions to construct. The price you pay for them will eventually reflect that reality. It is already starting to.

FAQ

Why are AI coding tools getting more expensive in 2026?

Three converging factors. First, model architectures now generate thousands of hidden reasoning tokens per request, which multiplies compute cost. Second, there is a global shortage of GPUs and data center capacity. Third, AI agent tools make hundreds of API calls per task instead of one. The $20 flat rate was subsidized by venture capital and was never sustainable.

Should I stop using AI coding tools to save money?

No. But use them strategically. Learn which problems genuinely benefit from AI assistance and which ones you can solve faster yourself. Short, precise prompts cost less than long conversations. And rebuild your ability to code without AI so you are not dependent on a tool whose pricing you do not control.

Will open-source AI models replace paid tools like Copilot and Claude?

For 60 to 70 percent of daily coding tasks, local open-source models are already viable. But for complex debugging, architecture decisions, and deep reasoning, frontier models still have a significant advantage. The practical approach is to use free or local models for routine work and save paid tokens for problems that genuinely need the most powerful models.
